Localization teams that have run large-scale MTPE programs for years know the exact moment the skepticism hits. A stakeholder reviews the first batch of post-edited content and asks, “This came from a machine? It reads too smooth—did the editor actually rewrite everything?” The doubt is understandable. Too many early MTPE projects earned a reputation for “good enough” rather than “excellent,” leaving buyers wondering whether post-editing is little more than a quick typo sweep.

The truth is more nuanced. When a mature linguistic quality assurance (LQA) framework sits at the center of the workflow, MTPE doesn’t just match human translation quality—it often exceeds it in consistency and scalability. The secret lies in treating quality as an engineered outcome, not an afterthought. That means building three interlocking systems: a meticulously maintained terminology database, a living style guide, and a multi-stage LQA process that catches issues long before they reach the client.

Why Terminology Databases Make or Break MTPE

Machine translation engines are only as good as the data they’re fed. A clean, client-specific terminology database is the single biggest lever for lifting output quality before a single word reaches a post-editor.

The process starts with corpus cleaning. Project managers pull every past translation memory and raw source file, then run automated scripts to remove duplicates, fix alignment errors, and flag segments with low fuzzy-match confidence or contradictory translations. In practice, this step alone can eliminate 15–25% of noisy data that would otherwise poison the engine. Domain experts then validate extracted terms against approved glossaries, marking preferred terms, forbidden variants, and context-specific rules (for example, distinguishing “bank” as a financial institution from “bank” as a riverbank in a fintech app).
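The deduplication and contradiction-flagging steps can be pictured with a minimal Python sketch. It assumes the translation memory has already been exported as plain (source, target) pairs; the function name `clean_corpus` and the sample data are hypothetical, and a production script would also handle alignment repair and fuzzy-match scoring, which are left out here.

```python
from collections import defaultdict


def clean_corpus(segments):
    """Deduplicate (source, target) pairs and flag contradictory translations.

    segments: iterable of (source, target) string pairs.
    Returns (unique_pairs, conflicts), where conflicts maps a normalized
    source string to the set of distinct normalized targets seen for it.
    """
    seen = set()
    unique_pairs = []
    targets_by_source = defaultdict(set)

    for source, target in segments:
        # Normalize whitespace and case so trivial variants count as duplicates.
        key = (" ".join(source.split()).lower(), " ".join(target.split()).lower())
        if key in seen:
            continue
        seen.add(key)
        unique_pairs.append((source.strip(), target.strip()))
        targets_by_source[key[0]].add(key[1])

    # A source with more than one distinct target needs human review.
    conflicts = {s: t for s, t in targets_by_source.items() if len(t) > 1}
    return unique_pairs, conflicts


# Hypothetical sample data for illustration only.
tm = [
    ("Open the account", "Ouvrir le compte"),
    ("Open the account ", "ouvrir le compte"),  # duplicate after normalization
    ("Open the account", "Ouvrez le compte"),   # contradictory target
    ("Close the account", "Fermer le compte"),
]
pairs, conflicts = clean_corpus(tm)
```

Here `pairs` keeps three of the four segments, and `conflicts` lists the one source string that carries two competing translations, which is the kind of entry a domain expert would then resolve.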

Once loaded into the translation management system, these terms become hard constraints. Modern engines can enforce them at runtime, dramatically reducing the cognitive load on post-editors. One manufacturing client I worked with saw terminology compliance jump from 68% to 94% after a single round of glossary optimization—cutting post-editing time by nearly 40% on subsequent batches.
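A terminology compliance figure like the one above can be computed in several ways. The sketch below shows one simple possibility, assuming compliance means "share of glossary-term occurrences in the source whose approved target term appears in the translation". The function name, the glossary entry, and the substring matching are illustrative assumptions; real checks usually need tokenization and morphology handling.

```python
def terminology_compliance(segments, glossary):
    """Return the share of glossary-term hits translated with the approved term.

    segments: list of (source, target) string pairs.
    glossary: dict mapping a source term to its approved target term.
    Uses naive case-insensitive substring matching (an assumption of this sketch).
    """
    hits = 0
    total = 0
    for source, target in segments:
        src, tgt = source.lower(), target.lower()
        for term, approved in glossary.items():
            if term.lower() in src:
                total += 1
                if approved.lower() in tgt:
                    hits += 1
    # With no glossary terms in the sample there is nothing to violate.
    return hits / total if total else 1.0


# Hypothetical glossary and segments for illustration only.
glossary = {"torque wrench": "clé dynamométrique"}
segments = [
    ("Tighten with a torque wrench.", "Serrez avec une clé dynamométrique."),
    ("Store the torque wrench dry.", "Rangez la clé à couple au sec."),
]
rate = terminology_compliance(segments, glossary)
```

In this toy sample one of the two occurrences uses the approved term, so `rate` is 0.5. Tracking the same ratio before and after a glossary update gives a like-for-like comparison.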

Style Guides: The Invisible Hand That Keeps Voice Consistent

Even the best terminology database can’t dictate tone, formality level, or brand personality. That’s where the style guide becomes indispensable.

A strong guide isn’t a dusty PDF—it’s a living document embedded directly into the CAT tool. It spells out everything from preferred sentence length and punctuation conventions to how to handle cultural references, gender-neutral language, and region-specific spelling (British vs. American English, for example). For marketing content it might mandate a warm, conversational tone; for technical documentation it demands precision and zero ambiguity.

Post-editors reference the guide in real time through inline prompts or automated QA checks. When multiple linguists work in parallel, the style guide becomes the single source of truth that prevents “style drift”—the subtle inconsistencies that make a document feel patched together rather than authored.
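As a rough illustration of what an automated style check might look like, the Python sketch below encodes two style-guide rules: a maximum sentence length and a list of disallowed regional spellings. The rule values, the function name `check_style`, and the sentence splitter are assumptions made for this example, not features of any particular CAT tool.

```python
import re


def check_style(text, max_words=25, banned_spellings=None):
    """Return a list of style issues found in `text`.

    max_words: longest sentence the (hypothetical) style guide allows.
    banned_spellings: dict mapping a disallowed spelling to the preferred one.
    """
    banned_spellings = banned_spellings or {}
    issues = []

    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    for i, sentence in enumerate(sentences, start=1):
        n = len(sentence.split())
        if n > max_words:
            issues.append(f"sentence {i}: {n} words exceeds limit of {max_words}")

    # Whole-word, case-insensitive match for each disallowed spelling.
    for wrong, preferred in banned_spellings.items():
        if re.search(rf"\b{re.escape(wrong)}\b", text, flags=re.IGNORECASE):
            issues.append(f"spelling: use '{preferred}' instead of '{wrong}'")

    return issues


# Hypothetical rules for a British English target locale.
issues = check_style(
    "Pick your favorite color. Done.",
    max_words=3,
    banned_spellings={"color": "colour"},
)
```

Checks like these only cover the mechanical part of a style guide. Tone and brand voice still depend on the linguist's judgment.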

In one enterprise software rollout spanning 18 languages, enforcing a detailed style guide reduced style-related LQA errors by 62% between the first and second content waves. The numbers weren’t surprising to anyone who has watched unguided MTPE projects spiral into endless revision cycles.

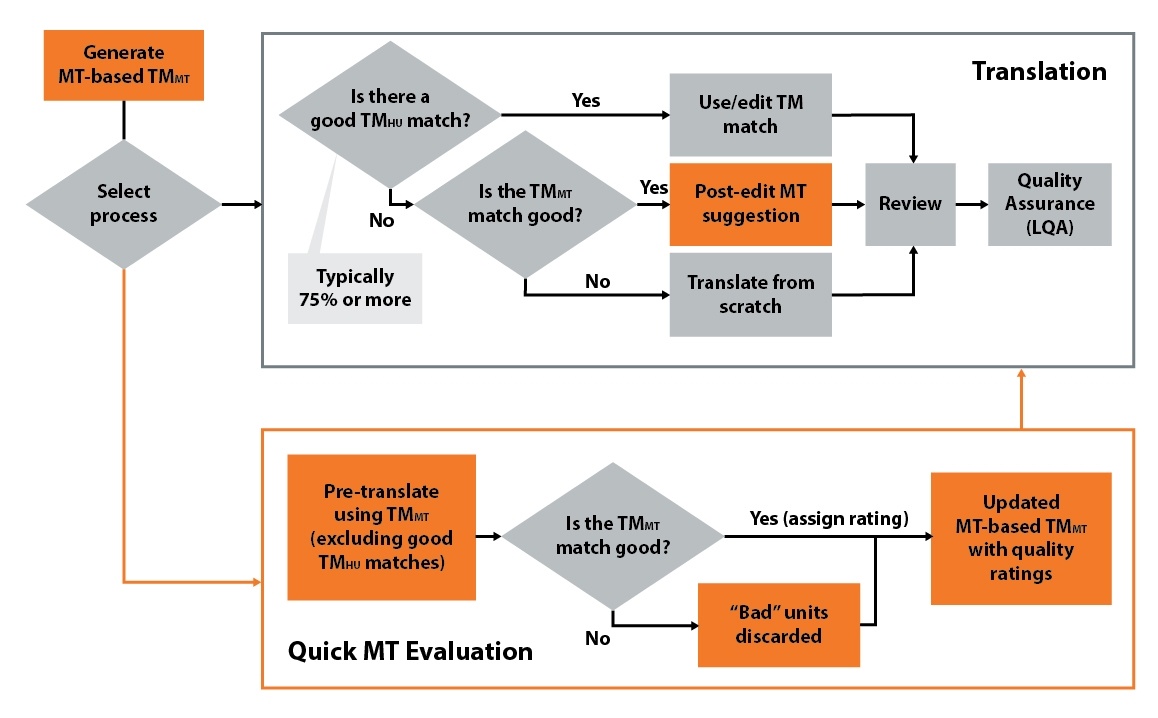

Multi-Layer LQA: The Quality Gate That Actually Works

Here’s where MTPE separates itself from “machine output with light fixes.” Rigorous LQA operates in layers, each catching different classes of issues.

Automated Pre-Checks: The TMS runs spelling, grammar, and terminology compliance scans the moment post-editing finishes. Any red flags route the file back automatically.

Error-Typology Sampling (MQM-Based): Reviewers don’t read every word. Instead, they use a standardized error typology (accuracy, fluency, terminology, style, locale convention) to score statistically valid samples. Severity levels (critical, major, minor) determine whether the batch passes or requires rework. This method has proven far more reliable than subjective “feels good” reviews.

Final Holistic Review + Client Spot-Check: A senior linguist performs a full read-through on high-visibility content, while the client receives a small validated sample for final sign-off.
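The sampling layer's pass-or-rework decision can be sketched in a few lines of Python. The severity weights, the 0.95 threshold, and the rule that any critical error fails the batch are assumptions chosen for illustration; actual programs set these values in their own LQA agreements.

```python
# Assumed penalty weights per severity level (illustrative, not a standard).
SEVERITY_WEIGHTS = {"minor": 1, "major": 5, "critical": 25}


def score_sample(errors, word_count, pass_threshold=0.95):
    """Score a reviewed sample and decide whether the batch passes.

    errors: list of (category, severity) tuples logged by the reviewer.
    word_count: number of words in the reviewed sample.
    Returns (score, passed), with score in the range 0.0 to 1.0.
    """
    if word_count <= 0:
        raise ValueError("word_count must be positive")

    penalty = sum(SEVERITY_WEIGHTS[severity] for _category, severity in errors)
    score = max(0.0, 1 - penalty / word_count)

    # In this sketch a single critical error blocks approval outright.
    has_critical = any(severity == "critical" for _category, severity in errors)
    passed = score >= pass_threshold and not has_critical
    return score, passed


# Hypothetical review log for a 1,000-word sample.
logged = [("terminology", "major"), ("fluency", "minor"), ("fluency", "minor")]
score, passed = score_sample(logged, word_count=1000)
```

With these assumed weights the sample accrues 7 penalty points over 1,000 words and passes. Adding one critical accuracy error would send the batch back for rework regardless of the numeric score.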

The table below shows a simplified version of the error categories most teams track:

| Error Category | Description | Severity Impact on MTPE Workflow | Typical Fix Rate with Strong LQA |
|---|---|---|---|
| Accuracy | Mistranslation, omission, addition | High – blocks approval | 85–92% on first pass |
| Fluency | Grammar, syntax, unnatural phrasing | Medium – affects readability | 78–88% |
| Terminology | Wrong term or inconsistent usage | High – brand risk | 90%+ with enforced glossary |
| Style & Tone | Deviates from brand voice or guide | Medium – consistency issue | 70–82% with active style guide |
| Locale Convention | Date formats, currency, units | Low–Medium – market-specific | 95%+ with automation |

Teams that implement this layered approach routinely achieve 95%+ first-pass acceptance rates on informational content—numbers that rival or beat full human translation when measured against the same error typology.

The Bottom-Line Proof

Industry data backs the approach. According to the latest Nimdzi Insights, MTPE adoption jumped from 26% of projects in 2022 to 46% in 2024, a relative increase of roughly 77%, precisely because organizations discovered that well-governed post-editing delivers the quality they need at scale. When LQA is rigorous, the cost-per-word savings (often 30–50%) come without the usual quality trade-offs.

The real win, though, is trust. Clients stop second-guessing every delivery. Internal teams stop treating localization as a bottleneck. And the entire program scales to handle the exploding volume of content that modern businesses generate.

At Artlangs Translation, this exact combination of clean terminology foundations, living style guides, and multi-layer LQA has been refined over years of delivering translation services, video localization, short-drama subtitle localization, game localization, multi-language audiobook dubbing, and large-scale data annotation and transcription across more than 230 language pairs. The track record of enterprise cases proves the point: when quality systems are this disciplined, MTPE stops being “the cheaper option” and becomes the smarter one.