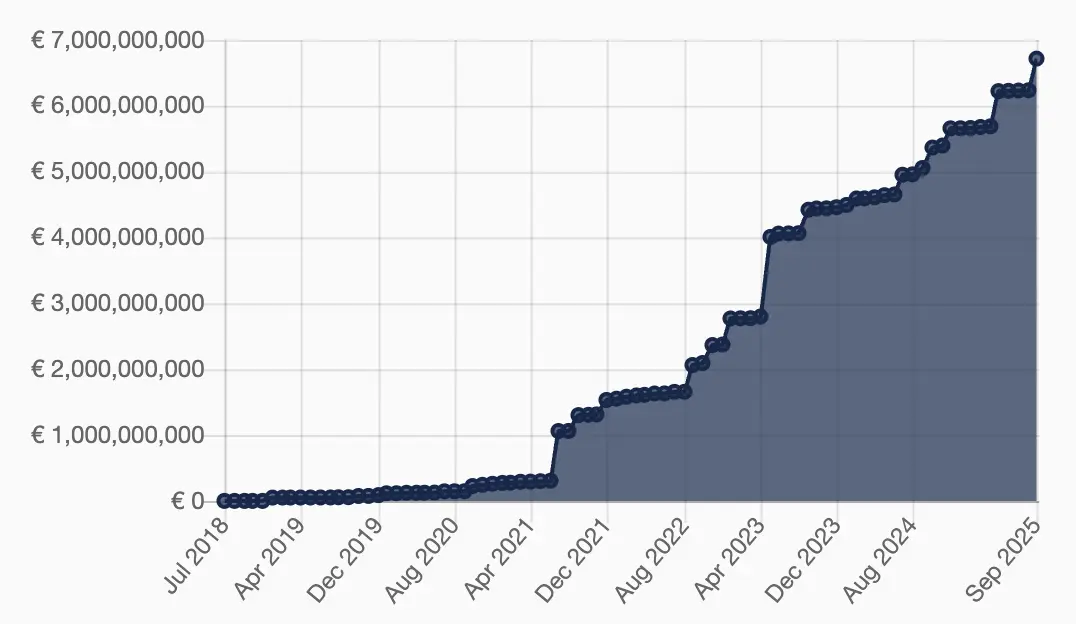

European enterprises handling patents, clinical trial reports, or regulated financial documents know the stakes. One confidential file pasted into a public AI tool can trigger a GDPR breach notification, months of regulatory scrutiny, and fines that now routinely reach seven or eight figures. With cumulative GDPR penalties exceeding €7.1 billion since 2018 and another €1.2 billion issued in 2025 alone, localization teams can no longer treat data security as an afterthought.

The fear is real and justified. Generative AI data leaks have become the top security concern for organizations entering 2026, cited by 34% of leaders, up sharply from 22% the year before. Public models like ChatGPT may log prompts, may reuse inputs for training, and operate outside your direct control. For any company processing personal data under GDPR, that setup is no longer viable.

The practical answer is a fully private machine translation post-editing (MTPE) workflow: enterprise-grade machine translation that never leaves your infrastructure, followed by controlled human post-editing under strict governance. Below are the architecture and process that compliance-focused localization teams have implemented to stay both fast and lawful.

Why Public MT Platforms Create Unacceptable Risk

When source content contains names, addresses, health records, or contract clauses, uploading it to a consumer-facing large language model means handing personal data to a third-party processor without a Data Processing Agreement that meets Article 28 standards. Even “no-training” promises from some providers don’t eliminate logging, caching, or future model-improvement risks. The possible consequences: notifying the supervisory authority within 72 hours of becoming aware of the breach (Article 33), fines of up to 4% of global annual turnover, and the very real possibility of supervisory authority audits.

Private deployment eliminates that vector entirely. The translation engine lives inside your VPC, on-premise servers, or a dedicated private cloud instance. No data ever crosses the public internet in raw form, and no vendor can access or reuse it.
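For context on the 4% ceiling mentioned above: Article 83(5) GDPR caps the upper tier of fines at EUR 20 million or 4% of total worldwide annual turnover for the preceding financial year, whichever is higher. Writing $T$ for that turnover and using two purely illustrative turnover values:

$$
\begin{aligned}
F_{\max} &= \max\bigl(20\ \text{M EUR},\ 0.04\,T\bigr)\\
T = 2000\ \text{M EUR} &\;\Rightarrow\; F_{\max} = \max(20,\ 80) = 80\ \text{M EUR}\\
T = 300\ \text{M EUR} &\;\Rightarrow\; F_{\max} = \max(20,\ 12) = 20\ \text{M EUR}
\end{aligned}
$$

These are ceilings, not typical amounts; actual fines are set case by case using the factors in Article 83(2).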

Core Architecture: Private Machine Translation Deployment

Start with a self-hosted neural MT engine. Leading options include fine-tuned open-source models (NLLB, Seamless, or custom transformers) or commercial on-premise solutions that run entirely within your security perimeter. The setup typically follows this layered model:

Infrastructure layer: Bare-metal servers, Kubernetes clusters, or isolated VPC subnets with encryption at rest (AES-256) and in transit (TLS 1.3).

Model layer: Domain-adapted engines trained on your approved terminology and parallel corpora. Client data is never used for further training.

Integration layer: Secure API endpoints behind WAF, IP whitelisting, and mutual TLS authentication. Translation requests are routed through your internal CAT tool or TMS.

This architecture satisfies GDPR’s integrity-and-confidentiality principle (Article 5(1)(f)) because the controller retains full technical and organizational control.
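Part of the integration layer can be approximated on the client side with Python's standard library. The sketch below is a minimal illustration, not a production gateway: it refuses to send requests anywhere except HTTPS endpoints that name an allowlisted internal host or a private IP literal, and it builds a TLS 1.3-only context that can present a client certificate for mutual TLS. The hostname `mt.internal.example` and the certificate file arguments are hypothetical placeholders; WAF rules and server-side IP allowlisting still belong in your network layer.

```python
import ipaddress
import ssl
from typing import Optional
from urllib.parse import urlparse

# Hypothetical internal hostname of the self-hosted MT engine.
INTERNAL_HOSTS = {"mt.internal.example"}


def is_private_endpoint(url: str) -> bool:
    """Allow only HTTPS URLs naming an allowlisted internal host or a private IP literal."""
    parsed = urlparse(url)
    host = parsed.hostname
    if parsed.scheme != "https" or host is None:
        return False
    if host in INTERNAL_HOSTS:
        return True
    try:
        # IP literals must sit in a private range such as 10.0.0.0/8 or 192.168.0.0/16.
        return ipaddress.ip_address(host).is_private
    except ValueError:
        # Any other hostname (e.g. a public SaaS API) is rejected.
        return False


def build_mtls_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Client TLS context: TLS 1.3 minimum, server certificate and hostname verified."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if cert_file:
        # Presenting a client certificate is what makes the handshake mutual.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

In use, the context would be handed to the HTTP client, for example `urllib.request.urlopen(url, context=ctx)`, and only after `is_private_endpoint(url)` has returned True. Failing closed on unknown hostnames is the deliberate design choice here.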

Securing the Human Post-Editing Stage

The real compliance work happens once the machine output reaches the linguist. Here’s how mature programs lock it down:

Data Desensitization Before Editing

Automated PII detection scans for names, emails, IDs, and health data.

Irreversible techniques (redaction or keyed hashing) replace sensitive fields that never need to be restored.

Reversible pseudonymization (for example, tokenization with a vaulted mapping) is used only when the editor needs context, with the mapping keys stored separately under dual control.
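The two desensitization modes can be sketched in Python as follows. This is a simplified illustration under stated assumptions: the two regular expressions (email addresses and a compact IBAN form) stand in for a real PII detector, which would also need to cover names, IDs, and health data; `mask_irreversibly` and `TokenVault` are hypothetical names; and the vault is an in-memory dict here, whereas in practice it would live in a separate store under dual control.

```python
import hashlib
import hmac
import re
import secrets

# Simplified detectors for illustration only; real PII detection needs far wider coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def mask_irreversibly(text: str, key: bytes) -> str:
    """Replace each detected value with a truncated HMAC-SHA256 digest (not reversible from the output)."""
    def _digest(match):
        return "[" + hmac.new(key, match.group(0).encode(), hashlib.sha256).hexdigest()[:12] + "]"

    for pattern in PII_PATTERNS.values():
        text = pattern.sub(_digest, text)
    return text


class TokenVault:
    """Reversible pseudonymization: random tokens in the text, originals kept in the vault."""

    def __init__(self) -> None:
        # token -> original value; in production this lives in a separate, dual-control store.
        self._mapping = {}

    def pseudonymize(self, text: str) -> str:
        for label, pattern in PII_PATTERNS.items():
            def _tokenize(match, label=label):
                token = f"[{label}_{secrets.token_hex(4)}]"
                self._mapping[token] = match.group(0)
                return token

            text = pattern.sub(_tokenize, text)
        return text

    def restore(self, text: str) -> str:
        # Re-identification step: intended to run only under dual control at QA and delivery.
        for token, original in self._mapping.items():
            text = text.replace(token, original)
        return text
```

Keyed hashing keeps masked values consistent across a document (the same email always maps to the same digest), which helps editors without exposing the value; random vault tokens are used where the original must come back later.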

Role-Based Permission Management

Least-privilege access: Post-editors see only the segments assigned to them.

Granular RBAC in the TMS prevents any single user from exporting full documents.

Session timeouts force re-authentication, and every edit is written to an immutable audit log that feeds directly into your SIEM.
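A least-privilege check along these lines could sit in front of the TMS. The role names, the `User` structure, and the two-approver export rule are assumptions made for illustration (one possible reading of "no single user can export full documents"), not the permission model of any particular TMS.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Iterable


class Permission(Enum):
    VIEW_SEGMENT = auto()
    EDIT_SEGMENT = auto()


# Hypothetical role model; note that no role can export a document on its own.
ROLE_PERMISSIONS = {
    "post_editor": {Permission.VIEW_SEGMENT, Permission.EDIT_SEGMENT},
    "reviewer": {Permission.VIEW_SEGMENT},
}

# Hypothetical roles allowed to co-sign a full-document export.
EXPORT_APPROVER_ROLES = {"project_manager", "compliance_officer"}


@dataclass
class User:
    user_id: str
    role: str
    assigned_segments: set = field(default_factory=set)


def is_allowed(user: User, permission: Permission, segment_id: str) -> bool:
    """Least privilege: the role must grant the action AND the segment must be assigned."""
    if permission not in ROLE_PERMISSIONS.get(user.role, set()):
        return False
    return segment_id in user.assigned_segments


def can_export(approvers: Iterable[User]) -> bool:
    """Full-document export needs two distinct approvers from eligible roles."""
    eligible = {u.user_id for u in approvers if u.role in EXPORT_APPROVER_ROLES}
    return len(eligible) >= 2
```

Counting distinct user IDs, rather than approvals, prevents one account from satisfying the rule twice.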

Controlled Editing Environment

Web-based CAT tools deployed in your private instance (no browser extensions phoning home).

Screen-recording disabled by policy and technical controls.

All changes versioned with timestamps, editor IDs, and rationale fields for audit readiness.

These steps turn post-editing from a potential weak link into a documented, auditable control point.
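One way to make the versioned edit history tamper-evident is a hash chain: each version record stores the SHA-256 hash of the record before it, so rewriting history breaks the chain. The sketch assumes hypothetical `EditRecord` and `EditLog` types; a real deployment would persist records to write-once storage and forward them to the SIEM.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


@dataclass(frozen=True)
class EditRecord:
    segment_id: str
    editor_id: str
    before: str
    after: str
    rationale: str
    timestamp: str  # ISO 8601, UTC
    prev_hash: str  # SHA-256 of the preceding record

    def digest(self) -> str:
        # Canonical JSON (sorted keys) so identical records always hash identically.
        payload = json.dumps(asdict(self), sort_keys=True, ensure_ascii=False)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class EditLog:
    """Append-only version history in which every record commits to its predecessor."""

    def __init__(self) -> None:
        self._records = []

    def append(self, segment_id: str, editor_id: str, before: str, after: str, rationale: str) -> EditRecord:
        prev = self._records[-1].digest() if self._records else GENESIS_HASH
        record = EditRecord(segment_id, editor_id, before, after, rationale,
                            datetime.now(timezone.utc).isoformat(), prev)
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain. Altering, reordering, or deleting any record other than
        the newest breaks a link; anchoring the latest digest externally (e.g. in the
        SIEM) extends the check to the newest record as well."""
        expected = GENESIS_HASH
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True
```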

Full Compliance Workflow in Practice

Here’s the end-to-end sequence that keeps everything GDPR-compliant:

| Stage | Action | GDPR Control Achieved |
|---|---|---|
| Intake | Classify content sensitivity; apply desensitization | Data minimization (Art. 5(1)(c)) |
| Pre-translation | Route to private MT engine only | Lawful processing & security |
| Post-editing | RBAC-restricted access + real-time audit logging | Accountability & integrity |
| QA & Delivery | Re-identification of pseudonymized fields (if needed) under dual control | Purpose limitation |
| Archiving | Encrypted storage with retention policy and deletion schedule | Storage limitation |

Teams following this model are well prepared for data protection authority audits because every step produces verifiable evidence of control.
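The stage ordering in the table can be enforced in code rather than by convention. This sketch uses a hypothetical `Job` type and a caller-supplied retention period; it refuses to skip stages, blocks pre-translation until desensitization is recorded, logs which GDPR control each completed stage evidences, and computes a deletion date for the archiving step.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from enum import IntEnum


class Stage(IntEnum):
    INTAKE = 1
    PRE_TRANSLATION = 2
    POST_EDITING = 3
    QA_DELIVERY = 4
    ARCHIVING = 5


# GDPR control each stage is meant to evidence, taken from the workflow table.
GDPR_CONTROL = {
    Stage.INTAKE: "Data minimization (Art. 5(1)(c))",
    Stage.PRE_TRANSLATION: "Lawful processing & security",
    Stage.POST_EDITING: "Accountability & integrity",
    Stage.QA_DELIVERY: "Purpose limitation",
    Stage.ARCHIVING: "Storage limitation",
}


@dataclass
class Job:
    job_id: str
    stage: Stage = Stage.INTAKE
    desensitized: bool = False
    evidence: list = field(default_factory=list)

    def can_advance(self, next_stage: Stage) -> bool:
        # Stages run strictly in order; none can be skipped.
        if next_stage != self.stage + 1:
            return False
        # Nothing reaches the MT engine until desensitization has been recorded.
        if next_stage == Stage.PRE_TRANSLATION and not self.desensitized:
            return False
        return True

    def advance(self, next_stage: Stage) -> None:
        if not self.can_advance(next_stage):
            raise ValueError(f"{self.job_id}: cannot move from {self.stage.name} to {next_stage.name}")
        # Record the control evidenced by the stage just completed.
        self.evidence.append((self.stage.name, GDPR_CONTROL[self.stage]))
        self.stage = next_stage


def deletion_date(archived_on: date, retention_days: int) -> date:
    """Storage limitation: the date an archived job is scheduled for deletion."""
    return archived_on + timedelta(days=retention_days)
```

The `evidence` list is the kind of per-step record an auditor would ask to see; in a real pipeline it would be written to the same immutable audit log as the edits.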

Measuring the Real-World Impact

Beyond avoiding fines, the operational upside is measurable. Private MTPE workflows can cut turnaround time by 50–70% compared with full human translation while maintaining the audit trail regulators demand. More importantly, they let legal, compliance, and localization teams speak the same language instead of fighting over risk.

The organizations that have invested in these private pipelines treat data security not as a checkbox but as a competitive advantage—especially when entering highly regulated European markets. They localize faster, sleep better at night, and keep full sovereignty over their most sensitive intellectual property and customer information.

At Artlangs Translation, teams have spent years refining exactly these workflows across more than 230 language pairs. From video localization and short-drama subtitle adaptation to game localization, multi-language audiobook dubbing, and large-scale data annotation transcription, the focus has always been on combining speed with iron-clad governance. The result is a track record of enterprise clients who meet GDPR requirements without sacrificing scale or quality. When your next localization project involves regulated content, the difference between hoping for compliance and engineering it is everything.