Empowering Machines with Human Emotions: How to Collect High-Quality Voice Cloning Data for Next-Generation Large Emotional TTS Models

Flat, robotic voices still plague too many apps, videos, and digital assistants. Users click away the moment a synthetic narrator sounds like it’s reading a grocery list instead of delivering real feeling. Better algorithms alone won’t fix that. The fix starts with the raw material: carefully collected voice data that captures every nuance of human emotion and pacing. Done right, TTS voice data collection turns lifeless synthesis into voices that laugh, console, or fire up listeners the way a real person would.

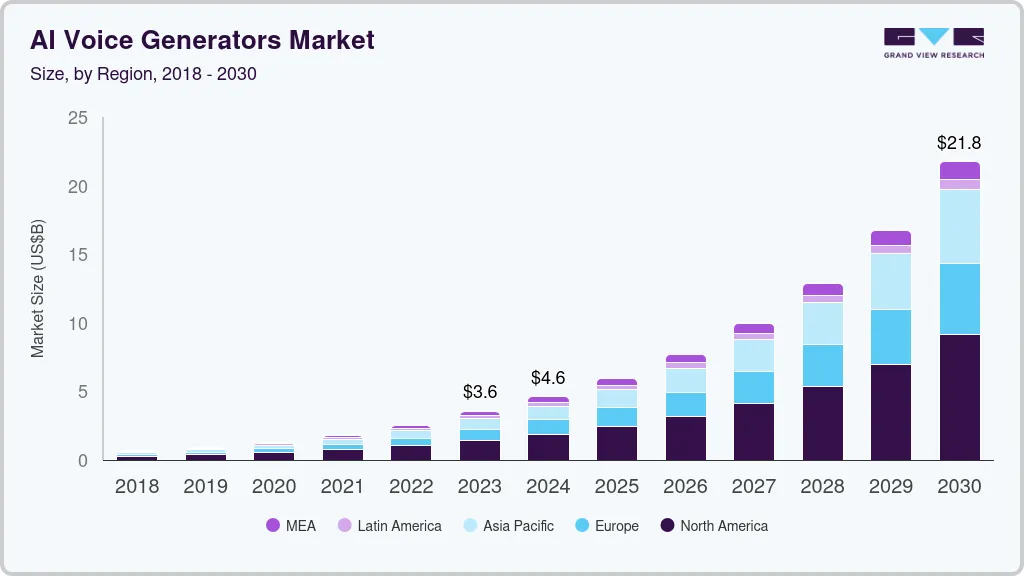

The numbers tell the story. The AI voice generator market stood at roughly $4.16 billion in 2025 and is on track to reach $20.71 billion by 2031, a blistering compound annual growth rate (CAGR) of 30.7%. Neural TTS engines already account for nearly half of that market because brands and developers finally understand one thing: users won’t engage with emotionless speech. More than 4.2 billion voice assistants in use worldwide are driving that demand, and the winners will be those whose voices actually feel alive.

That growth isn’t happening by accident. It’s happening because high-quality emotional datasets let models learn prosody—the natural rise and fall of pitch, the pauses that carry meaning, the tiny changes in speed and volume that signal joy, sorrow, or urgency. Without them, even the most advanced large models fall flat.

The Non-Negotiable Recording Environment for Professional TTS Voice Data Collection

You cannot train convincing emotional TTS on bedroom recordings or compressed phone audio. The studio itself becomes part of the data quality equation.

Start with a dedicated voice booth and a separate control room. Both need isolation from outside sound plus acoustic treatment (absorption panels, bass traps, and diffusers) to tame reverberation, so that the recorded noise floor sits below –60 dBFS. HVAC systems get shut off or rerouted; windows are double-glazed or sealed. Professional teams routinely measure the room with real-time analyzer (RTA) software before every session to confirm that the acoustics are consistent.
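To make the –60 dB noise-floor target checkable, here is a minimal sketch that measures the RMS level of a stretch of room tone (booth silent, all gear powered on). It reads the target as dBFS, the usual unit for recorded levels, and assumes the audio has already been decoded to floating-point samples in the range ±1.0. It uses the simple convention where a constant full-scale signal reads 0 dBFS; some meters add about 3 dB so that a full-scale sine reads 0 dBFS instead. The function names and default threshold are illustrative, not taken from any particular tool.

```python
import math
from typing import Sequence


def rms_dbfs(samples: Sequence[float]) -> float:
    """Return the RMS level of float samples (full scale = ±1.0) in dBFS.

    Convention: a constant full-scale signal reads 0 dBFS; digital
    silence reads -inf.
    """
    if len(samples) == 0:
        raise ValueError("need at least one sample")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0.0:
        return float("-inf")
    return 20.0 * math.log10(rms)


def meets_noise_floor(room_tone: Sequence[float], limit_dbfs: float = -60.0) -> bool:
    """True if the room-tone recording sits below the noise-floor limit."""
    return rms_dbfs(room_tone) < limit_dbfs


if __name__ == "__main__":
    # Hypothetical room tone: a tiny ±0.0005 hiss, about -66 dBFS.
    quiet_room = [0.0005, -0.0005] * 24_000
    # Hypothetical noisy room: ±0.01, i.e. -40 dBFS (an HVAC left running, say).
    noisy_room = [0.01, -0.01] * 24_000
    print(round(rms_dbfs(quiet_room), 1), meets_noise_floor(quiet_room))
    print(round(rms_dbfs(noisy_room), 1), meets_noise_floor(noisy_room))
```

Measuring before every session, as the teams above do with RTA software, turns "quiet enough" into a pass/fail number you can log alongside each recording day.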

Microphone choice matters just as much. A large-diaphragm cardioid condenser (think Neumann TLM 103 or equivalent) paired with a high-end audio interface (UAD Apollo or similar) captures the full dynamic range. Record at 48 kHz / 24-bit minimum; some pipelines now push to 96 kHz for even finer detail in breaths and micro-variations. Pop filters, shock mounts, and careful mic placement 6–8 inches (15–20 cm) from the mouth prevent plosives and rumble while preserving natural timbre.
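A format check at ingest catches files that slipped below the 48 kHz / 24-bit floor before they reach annotation. The sketch below uses Python’s standard-library `wave` module, which reads uncompressed PCM WAV headers; before Python 3.12 it rejects the WAVE_FORMAT_EXTENSIBLE header that some recorders write for 24-bit files, so treat it as a starting point rather than a production validator. The helper names are hypothetical. It also computes raw storage per hour, which is worth budgeting: 48,000 samples/s × 3 bytes × 3,600 s is 518.4 MB per mono hour, and 96 kHz doubles that.

```python
import io
import wave

MIN_RATE_HZ = 48_000   # 48 kHz floor from the recording spec
MIN_SAMPLE_BYTES = 3   # 24-bit PCM = 3 bytes per sample


def check_wav_spec(fileobj) -> list[str]:
    """Return a list of spec violations (empty if the file passes)."""
    problems = []
    with wave.open(fileobj, "rb") as wav:
        rate = wav.getframerate()
        width = wav.getsampwidth()
    if rate < MIN_RATE_HZ:
        problems.append(f"sample rate {rate} Hz < {MIN_RATE_HZ} Hz")
    if width < MIN_SAMPLE_BYTES:
        problems.append(f"bit depth {8 * width}-bit < {8 * MIN_SAMPLE_BYTES}-bit")
    return problems


def bytes_per_hour(rate_hz: int, sample_bytes: int, channels: int = 1) -> int:
    """Uncompressed PCM storage for one hour of audio."""
    return rate_hz * sample_bytes * channels * 3600


def make_silent_wav(rate_hz: int, sample_bytes: int, frames: int = 100) -> io.BytesIO:
    """Build a short in-memory mono WAV of silence, handy for testing the checker."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(sample_bytes)
        wav.setframerate(rate_hz)
        wav.writeframes(b"\x00" * sample_bytes * frames)
    buf.seek(0)  # rewind so the checker reads from the start
    return buf


if __name__ == "__main__":
    print(check_wav_spec(make_silent_wav(48_000, 3)))
    print(check_wav_spec(make_silent_wav(44_100, 2)))
    print(bytes_per_hour(48_000, 3) / 1e6, "MB per mono hour")
```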

Booth headphones are closed-back, so the monitor mix doesn’t bleed into the microphone, and they run on low-latency monitoring so the talent hears a clean feed of their own voice without delay. Every cable is high-grade, every gain stage is set correctly, and raw WAV files are backed up immediately. These aren’t nice-to-haves; they are the baseline that separates data suitable for next-generation emotional models from data that gets discarded during training.
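Instant backup is only trustworthy if the copies are verified. One minimal approach, sketched below, hashes each take with SHA-256 and confirms that every copy matches before the session moves on. The function names, and the idea of two destinations (say, a local drive plus a network share), are illustrative assumptions rather than a prescribed workflow.

```python
import hashlib
import shutil
from pathlib import Path
from typing import Iterable


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large WAVs never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup_take(src: Path, backup_dirs: Iterable[Path]) -> str:
    """Copy a raw take to every backup directory and verify each copy.

    Returns the source SHA-256 so it can be stored with the clip metadata.
    Raises OSError if any copy does not match the original byte for byte.
    """
    src = Path(src)
    expected = sha256_of(src)
    for directory in backup_dirs:
        directory = Path(directory)
        directory.mkdir(parents=True, exist_ok=True)
        dst = directory / src.name
        shutil.copy2(src, dst)  # copy2 also preserves file metadata such as mtime
        if sha256_of(dst) != expected:
            raise OSError(f"checksum mismatch for {dst}")
    return expected
```

Keeping the returned hash with each clip also lets later pipeline stages confirm they are training on the untouched original.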

Directing Talent: Turning Emotion and Pace into a Usable Corpus

The studio is ready. Now the real craft begins—coaxing authentic performances out of voice actors so the dataset contains genuine emotional range instead of acted “sad voice” caricatures.

Preparation is everything. Give actors detailed scene briefs, not just lines. For a joy segment: “You just won the lottery and you’re calling your best friend—energy high, laughter bubbling.” For sadness: “You’re reading a farewell letter you never sent—voice soft, pauses heavy.” Anger scripts get context like “Your flight was canceled for the third time—frustration building.”

Warm-ups matter. Breathing exercises, mirror work on facial expressions, and quick improvisation help actors drop into the emotion rather than force it. Record multiple takes of the same script at three deliberate speech rates:

• Slow (70–90 wpm) – perfect for reflective sadness or serious narration
• Medium (140–160 wpm) – natural default for most dialogue
• Fast (180+ wpm) – ideal for excitement, urgency, or heated arguments
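Checking each take against these bands is simple arithmetic: words spoken divided by clip duration in minutes. The sketch below uses the three bands above; a take that lands between them (for example 120 wpm) is flagged rather than forced into a bucket. It assumes a word count from the script and a duration measured after trimming leading and trailing silence; the function names are hypothetical.

```python
def words_per_minute(word_count: int, duration_s: float) -> float:
    """Speech rate over a clip; pauses inside the clip count toward duration."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return word_count * 60.0 / duration_s


def rate_category(wpm: float) -> str:
    """Map a measured rate onto the slow / medium / fast recording bands."""
    if 70 <= wpm <= 90:
        return "slow"
    if 140 <= wpm <= 160:
        return "medium"
    if wpm >= 180:
        return "fast"
    return "off-target"  # between bands: consider a retake


if __name__ == "__main__":
    # A 40-word line read in 30 s is 80 wpm, inside the slow band.
    for words, seconds in [(40, 30.0), (75, 30.0), (100, 30.0), (60, 30.0)]:
        wpm = words_per_minute(words, seconds)
        print(f"{words} words / {seconds:.0f} s = {wpm:.0f} wpm -> {rate_category(wpm)}")
```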

Vary volume and pitch deliberately too. Joy often lifts pitch and adds melodic variation; anger sharpens consonants and raises intensity; sadness lowers both pitch and energy while lengthening pauses. Capture at least 2–3 hours of clean audio per speaker, spread across emotions and tempos, to give models enough variety to generalize.
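To confirm that those hours really are spread across emotions and tempos, you can total clip durations per (emotion, rate) cell and flag thin cells. This sketch assumes the three emotions and three rates named above and an even split of a 2-hour minimum, about 13.3 minutes per cell; both the even split and the names are assumptions to tune against your own script plan.

```python
from collections import defaultdict
from itertools import product
from typing import Iterable, List, Tuple

EMOTIONS = ("joy", "sadness", "anger")  # the emotions discussed above
RATES = ("slow", "medium", "fast")      # the three recording speeds


def coverage_report(
    clips: Iterable[Tuple[str, str, float]], target_hours: float = 2.0
) -> Tuple[float, float, List[Tuple[str, str]]]:
    """Summarize one speaker's coverage.

    clips: (emotion, rate, duration_seconds) for each usable take.
    Returns (total_hours, per_cell_target_minutes, under_filled_cells).
    """
    minutes = defaultdict(float)
    for emotion, rate, duration_s in clips:
        minutes[(emotion, rate)] += duration_s / 60.0
    cells = list(product(EMOTIONS, RATES))
    per_cell_target = target_hours * 60.0 / len(cells)  # even split (assumption)
    short = [cell for cell in cells if minutes[cell] < per_cell_target]
    total_hours = sum(minutes.values()) / 60.0
    return total_hours, per_cell_target, short


if __name__ == "__main__":
    # Hypothetical session: 15 minutes per cell, except fast anger got only 5.
    session = [(e, r, 900.0) for e, r in product(EMOTIONS, RATES)]
    session[-1] = ("anger", "fast", 300.0)
    total, target, short = coverage_report(session)
    print(f"{total:.2f} h recorded, target {target:.1f} min/cell, short: {short}")
```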

After every session, immediate annotation tags each clip: primary emotion, intensity (1–5 scale), speech rate category, and any background notes on breathing or micro-expressions. This metadata becomes gold when training the model to control emotion on demand.
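Validating annotations at entry time keeps that metadata usable. Below is one possible record layout covering the fields listed above (primary emotion, 1–5 intensity, speech-rate category, free-text notes), written out as one JSON object per line. The field names, label sets, and JSON Lines format are assumptions for illustration; extend the emotion set to match your own script plan.

```python
import json
from dataclasses import asdict, dataclass

EMOTIONS = {"joy", "sadness", "anger"}  # extend to match your script plan
RATE_CATEGORIES = {"slow", "medium", "fast"}


@dataclass(frozen=True)
class ClipAnnotation:
    clip_id: str
    speaker_id: str
    emotion: str     # primary emotion label
    intensity: int   # 1 (lowest) to 5 (highest)
    rate: str        # speech-rate category
    notes: str = ""  # breathing, laughter, other vocal details

    def __post_init__(self) -> None:
        # Reject bad labels at entry time instead of during training.
        if self.emotion not in EMOTIONS:
            raise ValueError(f"unknown emotion {self.emotion!r}")
        if not isinstance(self.intensity, int) or not 1 <= self.intensity <= 5:
            raise ValueError(f"intensity must be an integer 1-5, got {self.intensity!r}")
        if self.rate not in RATE_CATEGORIES:
            raise ValueError(f"unknown rate category {self.rate!r}")

    def to_jsonl(self) -> str:
        """Serialize as one line of a JSON Lines manifest."""
        return json.dumps(asdict(self), ensure_ascii=False)


if __name__ == "__main__":
    note = ClipAnnotation("spk01_0042", "spk01", "joy", 4, "fast", "laughs on last word")
    print(note.to_jsonl())
```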

Turning Raw Recordings into a Training-Ready Dataset

Speaker diversity makes the whole dataset more robust. Include speakers across age groups, genders, and regional accents, and add native speakers of each target language if you plan a multilingual deployment. Balance scripted and semi-improvised segments so the model learns both controlled prosody and natural spontaneity. Run automated quality checks for clipping, background noise, and text-to-audio alignment, then have human reviewers sign off on emotional authenticity; machines still miss subtle cues that trained ears catch instantly.
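Clipping is the easiest of those automated checks to sketch. A common heuristic, used here, flags a clip when several consecutive samples sit at or near full scale, since a natural waveform rarely lingers there unless the converter ran out of headroom. The 0.999 threshold and three-sample run length are assumed defaults, and the input is assumed to be float samples in ±1.0; noise and alignment checks need their own tooling.

```python
from typing import Sequence


def longest_full_scale_run(samples: Sequence[float], threshold: float = 0.999) -> int:
    """Length of the longest run of consecutive samples with |s| >= threshold."""
    longest = current = 0
    for s in samples:
        if abs(s) >= threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


def is_clipped(samples: Sequence[float], threshold: float = 0.999, min_run: int = 3) -> bool:
    """Flag a clip when min_run or more consecutive samples sit at full scale."""
    return longest_full_scale_run(samples, threshold) >= min_run


if __name__ == "__main__":
    clean = [0.2, 0.9, 1.0, 0.7, -0.99, -0.4]     # single peak touching full scale
    squared_off = [0.3, 1.0, 1.0, 1.0, 1.0, 0.6]  # flat top: classic clipping
    print(is_clipped(clean), is_clipped(squared_off))
```

Flagged clips go to the human reviewers rather than being dropped automatically, since a single loud laugh can trip the check on an otherwise usable take.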

The payoff is measurable. Models trained on emotionally rich, high-fidelity data tend to earn higher mean opinion scores (MOS) for naturalness and expressiveness. They stop sounding like robots reading scripts and start sounding like companions who understand the weight behind every word.
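A mean opinion score is simply the average of listener ratings on a 1–5 scale, but a single number hides how much raters disagreed. The sketch below reports the mean together with an approximate 95% confidence interval using the normal approximation (±1.96 standard errors), which is reasonable for dozens of ratings; for small listener panels a Student’s t multiplier would be more appropriate. The function name and example ratings are hypothetical.

```python
import math
from statistics import fmean, stdev
from typing import Sequence, Tuple


def mos_with_ci(ratings: Sequence[int], z: float = 1.96) -> Tuple[float, float]:
    """Return (MOS, half-width of the approximate 95% confidence interval).

    ratings: listener scores on the 1 (bad) to 5 (excellent) scale.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings to estimate spread")
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must lie on the 1-5 scale")
    mean = fmean(ratings)
    # Standard error of the mean = sample standard deviation / sqrt(N).
    half_width = z * stdev(ratings) / math.sqrt(len(ratings))
    return mean, half_width


if __name__ == "__main__":
    # Hypothetical naturalness ratings for one synthesized voice.
    scores = [4, 5, 4, 3, 4, 5, 4, 4, 3, 4]
    mos, ci = mos_with_ci(scores)
    print(f"MOS {mos:.2f} ± {ci:.2f}")
```

When two voices’ intervals overlap heavily, a difference in raw MOS is weak evidence that one model actually sounds better.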

From Dataset to Digital Human Voices That Actually Connect

When the collection process is this deliberate, the resulting TTS voices don’t just speak—they resonate. Digital humans in games deliver lines with real tension. Audiobook listeners stay immersed for hours. Short dramas dubbed across borders keep cultural emotion intact. Virtual assistants finally comfort users instead of confusing them.

Achieving that level of quality at scale takes more than good intentions and a quiet room. It takes teams who have spent years perfecting multilingual voice workflows, from precise data annotation and transcription to full localization pipelines.

That’s where specialized partners come in. Artlangs Translation brings exactly this depth—mastery of 230+ languages, proven delivery in video localization, short drama subtitle and dubbing work, game localization, audiobook multi-language narration, and large-scale data annotation projects. Their casebook of successful voice cloning and emotional TTS deployments gives organizations the shortcut from raw studio sessions to production-ready, emotionally intelligent voice models that actually move people. The machines don’t have feelings yet, but thanks to data collected the right way, they’re finally learning how to sound like they do.