Creators and global enterprises facing the rapid growth of short-form video and interactive content are increasingly adopting multimodal AI translation services to keep images, on-screen text, audio, and video precisely in sync. Short drama revenues alone are projected to hit $14 billion in 2026, while the broader video localization market is expected to reach $4.02 billion the same year. Yet text-only translation tools keep failing when visuals, spoken dialogue, subtitles, and audio must align perfectly across languages, which leads to awkward lip movements, mismatched subtitles, and lost viewer engagement.

The shift to multimodal content is no longer optional. TikTok, YouTube Shorts, and vertical drama platforms now account for the majority of daily video consumption, with video, led by short-form formats, expected to make up around 82% of internet traffic by the end of 2026. Brands, game studios, and content producers need translations that do more than convert words: they must preserve timing, emotional tone, visual context, and cultural nuance all at once. Traditional text-first pipelines simply break under this pressure.

Why Text-Only Translation Tools Collapse in Multimodal Scenarios

Single-modality AI translators work fine for plain documents or simple captions. Add a video with on-screen text, background music, facial expressions, and rapid dialogue changes, and the limitations become glaring. A literal subtitle translation might be grammatically correct but appear half a second too late, breaking immersion. Dubbing without lip-sync leaves characters’ mouths moving out of step with the words. Overlaid graphics or memes lose their punch when the translated text no longer fits the visual timing or cultural reference.

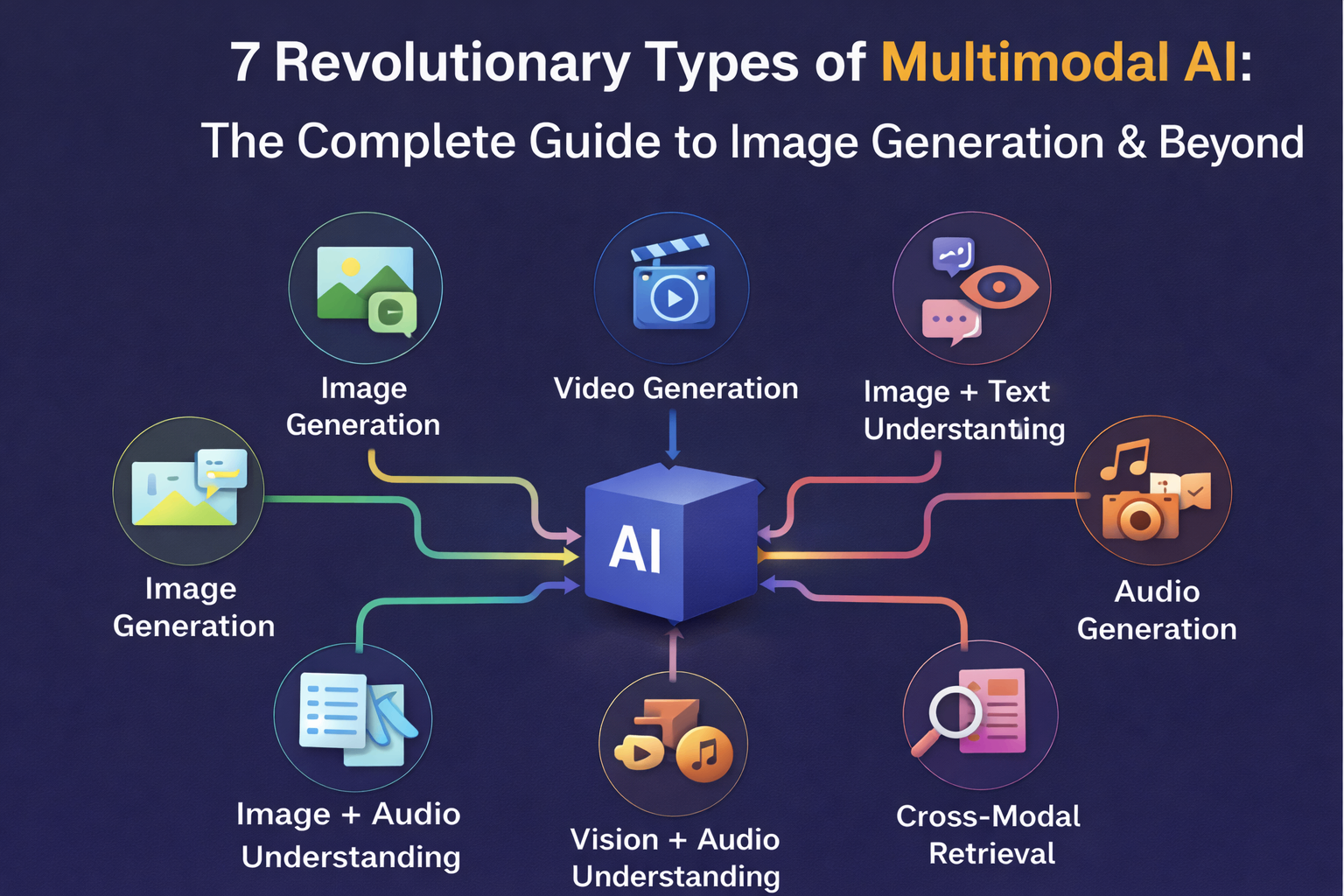

In practice, text-only systems struggle with context that spans modalities and often produce output that feels flat or disconnected. Multimodal systems instead process everything together, extracting visual cues from images, prosody from audio, and intent from text, to deliver results that feel native. The multimodal AI market itself is growing at a reported 32–36% CAGR, driven in large part by the need for this integrated approach in translation, localization, and content adaptation.

Inside the Multimodal AI Translation Process: A Technical Flow That Delivers Sync Accuracy

Here’s how a professional multimodal AI translation service actually works, step by step. The process isn’t magic: it’s a tightly orchestrated pipeline designed for frame-accurate results.

Step 1: Unified Content Ingestion

The system accepts raw video, audio tracks, on-screen text overlays, and any accompanying images or graphics as a single input package.
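
To make the idea of a single input package concrete, here is a minimal sketch of a manifest that places every asset on the video’s timeline and checks that nothing falls outside it. The `Asset` and `IngestionPackage` types, the allowed asset kinds, and the use of seconds are assumptions for illustration; real services each define their own upload formats.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Asset:
    """One input asset on the shared timeline (hypothetical schema)."""
    kind: str      # assumed kinds: "audio", "overlay_text", or "image"
    start: float   # seconds from the start of the video
    end: float     # seconds from the start of the video
    payload: str   # file path or inline text


@dataclass
class IngestionPackage:
    """Hypothetical manifest bundling a video with its related assets."""
    video_path: str
    duration: float  # video length in seconds
    assets: List[Asset] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the package is consistent."""
        problems = []
        allowed = {"audio", "overlay_text", "image"}
        for i, a in enumerate(self.assets):
            if a.kind not in allowed:
                problems.append(f"asset {i}: unknown kind {a.kind!r}")
            # Every asset must start before it ends and sit inside the video's timeline.
            if not (0 <= a.start < a.end <= self.duration):
                problems.append(f"asset {i}: [{a.start}, {a.end}] outside 0..{self.duration}s")
        return problems
```

For example, an end card listed from 118 s to 125 s on a 120-second episode would be reported by `validate()` before any translation work starts, which is cheaper than discovering the mismatch at re-sync time.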

Step 2: Modality Separation & Feature Extraction

Advanced models (combining computer vision, speech recognition, and NLP) pull out key elements: facial movements for lip-sync, tone and rhythm from audio, semantic meaning from text, and visual context from images.
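
Production systems use trained speech-recognition and vision models for this step. As a far simpler stand-in for the audio branch only, the sketch below performs energy-based voice activity detection: it computes the RMS energy of short windows and merges loud windows into speech segments, a crude version of the rhythm signal that later steps align against. The function name, the 20 ms window, and the threshold are assumptions for illustration.

```python
import math
from typing import List, Tuple


def speech_segments(samples: List[float], sample_rate: int,
                    window_s: float = 0.02,
                    threshold: float = 0.05) -> List[Tuple[float, float]]:
    """Energy-based voice activity detection (a simplified stand-in for a speech model).

    Splits the signal into fixed windows, computes each window's RMS energy, and
    merges consecutive windows at or above `threshold` into (start, end) segments
    measured in seconds.
    """
    win = max(1, int(window_s * sample_rate))
    segments: List[Tuple[float, float]] = []
    seg_start = None
    for i in range(0, len(samples), win):
        chunk = samples[i:i + win]
        rms = math.sqrt(sum(x * x for x in chunk) / len(chunk))
        t = i / sample_rate
        if rms >= threshold and seg_start is None:
            seg_start = t                      # speech begins in this window
        elif rms < threshold and seg_start is not None:
            segments.append((seg_start, t))    # speech ended where this window starts
            seg_start = None
    if seg_start is not None:                  # speech runs to the end of the clip
        segments.append((seg_start, len(samples) / sample_rate))
    return segments
```

With one second of silence, one second of tone, and one second of silence at 1 kHz, this returns a single segment from 1.0 s to 2.0 s. On real dialogue a fixed threshold is easily fooled by background music and noise, which is one reason providers rely on learned models instead.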

Step 3: Cross-Modal Alignment

This is the critical sync layer. Algorithms map audio timestamps to video frames, align subtitle timing with dialogue peaks, and ensure translated text overlays respect the original layout and reading speed. Temporal alignment prevents the classic “subtitle lag” or “voice drift” problems.
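
Two of the alignment rules described here can be made concrete: snapping timestamps to whole video frames, and extending a subtitle cue until it can be read at a comfortable speed without running into the next cue. The sketch assumes a hypothetical `Cue` type, a 17 characters-per-second reading limit, and a frame rate supplied by the caller; these are illustrative choices, not parameters the article specifies.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Cue:
    start: float  # seconds
    end: float    # seconds
    text: str


def to_frame(t: float, fps: float) -> int:
    """Map a timestamp in seconds to the nearest frame index."""
    return round(t * fps)


def align_cues(cues: List[Cue], fps: float, max_cps: float = 17.0) -> List[Cue]:
    """Snap cues to frame boundaries and extend short cues to meet a reading-speed limit.

    A cue is never extended past the start of the following cue, so cues stay
    non-overlapping. Input cues are assumed to be sorted by start time.
    """
    out: List[Cue] = []
    for i, cue in enumerate(cues):
        start_f = to_frame(cue.start, fps)
        end_f = to_frame(cue.end, fps)
        # Minimum number of frames on screen so the text can be read at max_cps.
        min_frames = math.ceil(len(cue.text) / max_cps * fps)
        end_f = max(end_f, start_f + min_frames)
        if i + 1 < len(cues):
            end_f = min(end_f, to_frame(cues[i + 1].start, fps))
        out.append(Cue(start_f / fps, end_f / fps, cue.text))
    return out
```

Clamping to the next cue’s start is a design choice: it keeps cues from overlapping at the cost of sometimes leaving a fast line on screen for less time than the reading-speed target, which a reviewer or a text-condensing pass would then need to handle.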

Step 4: Contextual, Culturally Aware Translation

Translation happens with full awareness of all inputs. A sarcastic remark detected through vocal tone and facial expression gets rendered with equivalent cultural flair in the target language, not as a flat literal version.
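
The key point is that the translator receives more than the words. Here is a minimal sketch, assuming a hypothetical request format: each dialogue segment carries the tone and visual cues detected in earlier steps, plus a character budget derived from how long the line stays on screen, so the target-language rendering is asked to fit its time slot. The `Segment` type, the field names, and the 17 characters-per-second budget are assumptions, and the translation call itself (a model or a human linguist) is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Segment:
    text: str
    start: float                 # seconds
    end: float                   # seconds
    tone: str = "neutral"        # label from audio prosody analysis (assumed)
    visual_cues: List[str] = field(default_factory=list)  # e.g. "eye roll" (assumed)


def build_request(seg: Segment, target_lang: str, max_cps: float = 17.0) -> Dict[str, object]:
    """Bundle a segment with its cross-modal context for a downstream translator.

    `max_chars` caps the translation length so it can be read within the
    segment's on-screen duration at `max_cps` characters per second.
    """
    duration = seg.end - seg.start
    return {
        "source_text": seg.text,
        "target_lang": target_lang,
        "tone": seg.tone,
        "visual_cues": list(seg.visual_cues),
        "max_chars": int(duration * max_cps),
    }
```

For a two-second sarcastic line such as `Segment("Oh, great. Just great.", 10.0, 12.0, tone="sarcastic", visual_cues=["eye roll"])`, the request carries the sarcasm label, the eye-roll cue, and a 34-character budget.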

Step 5: Output Generation & Quality Assurance

Final dubbing, subtitles, localized graphics, and re-synced video are produced. Human review loops (or automated quality scoring) catch any remaining edge cases before delivery.
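
Automated quality scoring can start as simply as comparing each subtitle cue with the speech segment it captions and routing large offsets to a human reviewer. The sketch assumes cues and speech segments are already paired one to one and uses an illustrative 0.2-second tolerance; neither detail comes from the article, and the function names are hypothetical.

```python
from typing import List, Tuple

Span = Tuple[float, float]  # (start, end) in seconds


def sync_deviation(cue: Span, speech: Span) -> float:
    """Largest boundary offset between a subtitle cue and its speech segment, in seconds."""
    return max(abs(cue[0] - speech[0]), abs(cue[1] - speech[1]))


def flag_for_review(cues: List[Span], speech: List[Span], tolerance: float = 0.2) -> List[int]:
    """Return indices of cue/speech pairs whose deviation exceeds `tolerance`."""
    if len(cues) != len(speech):
        raise ValueError("expected cues and speech segments to be paired one to one")
    return [i for i, (c, s) in enumerate(zip(cues, speech))
            if sync_deviation(c, s) > tolerance]
```

With cues `[(1.0, 2.5), (3.0, 4.0), (5.6, 7.0)]` against speech `[(1.05, 2.45), (3.0, 4.1), (5.0, 7.0)]`, only the third cue, which starts 0.6 s late, is flagged for review.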

The entire flow typically runs end to end, and many providers now quote 48-hour turnarounds for standard projects, with faster delivery for urgent campaigns.

Real Case Demonstrations: When Sync Accuracy Changes Everything

Case 1: Vertical Short Drama Localization

A popular Chinese short drama series needed English, Spanish, and Arabic versions for global streaming. The original 2-minute episodes featured rapid dialogue, emotional close-ups, and on-screen text effects. Text-only translation produced accurate subtitles but completely missed lip-sync opportunities and cultural pacing adjustments. The multimodal service re-dubbed voices while preserving the lead actress’s emotional delivery, timed subtitles to natural pauses, and adjusted on-screen graphics for right-to-left reading in Arabic. Result? Viewer retention jumped 40% in test markets, with the English version climbing the U.S. charts within weeks.
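
Deciding which on-screen text needs right-to-left layout is one part of this case that is easy to show in isolation. Here is a minimal sketch, assuming the decision is made per string from its first strong directional character, a simplified form of rules P2 and P3 of the Unicode Bidirectional Algorithm that ignores isolate and embedding controls. `needs_rtl_layout` is a hypothetical helper name; `unicodedata.bidirectional` is part of Python’s standard library.

```python
import unicodedata


def needs_rtl_layout(text: str) -> bool:
    """Return True if the first strong directional character is right-to-left.

    Simplified from rules P2-P3 of the Unicode Bidirectional Algorithm (UAX #9):
    isolate and embedding control characters are not handled here.
    """
    for ch in text:
        bidi = unicodedata.bidirectional(ch)
        if bidi in ("R", "AL"):   # Hebrew-type or Arabic-type letters
            return True
        if bidi == "L":           # Latin, CJK, and other left-to-right letters
            return False
    return False                  # no strong character (digits, punctuation only)
```

A leading number does not decide the direction: an Arabic caption that starts with “2026” is still classified as right-to-left because digits are not strong directional characters.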

Case 2: Mobile Game Launch with UI + Voice + Visuals

A new puzzle game included animated tutorials, character voice lines, and dynamic UI text that changed based on player actions. Early text-only attempts left voiceovers out of sync with character animations and produced UI pop-ups that no longer fit the translated strings. Multimodal processing aligned dubbed audio to mouth movements, resized and repositioned UI elements for each language, and ensured tutorial text appeared exactly when the on-screen action happened. The game launched smoothly in 12 languages, reducing negative reviews about “clunky localization” by 85%.
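
Checking whether a translated string still fits its UI element can start with a rough width estimate. The sketch below uses a hypothetical `fit_font_size` helper and a crude average-glyph-width heuristic (0.55 em per character, an assumed value); a production pipeline would measure text with the actual font’s metrics instead. When shrinking would push the text below a minimum legible size, it returns `None` so the element can be resized or repositioned rather than squeezed.

```python
from typing import Optional


def fit_font_size(text: str, box_width_px: float, base_size_px: float,
                  min_size_px: float = 10.0, avg_char_em: float = 0.55) -> Optional[float]:
    """Pick the largest font size (not above the base size) at which `text` fits on one line.

    Width is estimated as len(text) * avg_char_em * font_size, a crude heuristic
    that assumes a roughly uniform average glyph width. Returns None when even
    `min_size_px` would not fit, signalling that the element needs repositioning
    or wrapping instead of shrinking.
    """
    if not text:
        return base_size_px
    size = box_width_px / (len(text) * avg_char_em)
    size = min(size, base_size_px)
    return size if size >= min_size_px else None
```

A short label such as “Play” keeps its original size, while a longer translation such as the German “Spiel fortsetzen” in a 150-pixel button is scaled down, and a sentence-length string returns `None`.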

Case 3: Corporate Training Video with Overlaid Graphics

A multinational company’s safety training video combined live-action footage, animated diagrams, and narrated explanations. Text-only translation handled the script but left diagrams untranslated and voice timing mismatched. The full multimodal approach translated on-screen text directly within the visuals, dubbed the narrator while keeping natural intonation, and synchronized animated callouts to the new audio. Training completion rates improved noticeably across non-English speaking teams.
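
Re-synchronizing animated callouts to a dubbed narration that runs at a different pace can be framed as a time-remapping problem. Here is a minimal sketch, assuming matching anchor points (for example, sentence boundaries) are already known in both the original and the dubbed track: keyframe times between anchors are interpolated linearly. The function name and the anchor format are hypothetical, and piecewise-linear remapping is one simple choice among several.

```python
from bisect import bisect_right
from typing import List, Tuple


def remap_time(t: float, anchors: List[Tuple[float, float]]) -> float:
    """Map a time on the original narration track to the dubbed track.

    `anchors` are (original_time, dubbed_time) pairs, non-empty and strictly
    increasing in original_time. Times between anchors are interpolated
    linearly; times outside the anchor range are shifted by the nearest
    anchor's offset.
    """
    xs = [a for a, _ in anchors]
    if t <= xs[0]:
        return t + (anchors[0][1] - xs[0])
    if t >= xs[-1]:
        return t + (anchors[-1][1] - xs[-1])
    i = bisect_right(xs, t) - 1           # anchors[i] <= t < anchors[i + 1]
    (x0, y0), (x1, y1) = anchors[i], anchors[i + 1]
    return y0 + (t - x0) * (y1 - y0) / (x1 - x0)
```

If the dubbed narration stretches the first sentence from 4 s to 5 s, a callout originally at 2 s moves to 2.5 s, so it still appears at the same point in what is being said.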

These aren’t cherry-picked wins. They reflect what happens when every modality informs every other—something impossible with legacy tools.

Choosing the Right Multimodal AI Translation Service in 2026

Look beyond marketing claims. Demand providers who can prove:

Frame-level lip-sync accuracy (ideally 95%+ on benchmark tests)

Support for 100+ languages with cultural adaptation layers

Transparent quality metrics that include sync deviation scores

Hybrid human-AI workflows for high-stakes content
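
Providers define lip-sync accuracy in different ways, so it helps to ask how a figure like 95% is computed. One simple, hypothetical definition is per-frame agreement between whether the speaker’s mouth is open (from a vision model) and whether the dubbed track is voiced (from voice activity detection). The sketch below computes that ratio; it illustrates the kind of metric to ask about and is not an industry-standard benchmark.

```python
from typing import Sequence


def frame_agreement(mouth_open: Sequence[bool], voiced: Sequence[bool]) -> float:
    """Fraction of frames where the on-screen mouth state matches dubbed voice activity.

    Both inputs are per-frame booleans of equal length: `mouth_open` from a
    vision model and `voiced` from voice activity detection on the dubbed track.
    """
    if len(mouth_open) != len(voiced) or not mouth_open:
        raise ValueError("expected two non-empty per-frame sequences of equal length")
    matches = sum(m == v for m, v in zip(mouth_open, voiced))
    return matches / len(mouth_open)
```

A clip in which 19 of 20 frames agree scores 0.95; the same ratio over a full episode is only meaningful if the vision and audio labels themselves are reliable, which is worth asking a provider about as well.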

The best services also deliver output optimized for fast loading and smooth playback, which matters for Core Web Vitals when videos are embedded on marketing sites or web apps.

The numbers don’t lie: content that feels native in every language drives higher engagement, better conversion, and stronger brand loyalty. Content that feels “translated” gets skipped.

For organizations that need this level of technical precision and creative sensitivity across more than 230 languages, Artlangs Translation stands out as the partner that has been refining exactly these capabilities for years. Their deep specialization in translation services extends naturally into video localization, short drama subtitle localization, game localization, multi-language short drama and audiobook dubbing, plus multi-language data annotation and transcription. With a proven portfolio of successful high-volume projects, they turn complex multimodal challenges into seamless, audience-ready experiences that perform from day one. In a world where every frame and every second counts, that kind of integrated expertise isn’t just helpful—it’s the difference between content that connects and content that gets lost in translation.