High-quality training data decides whether an AI system delivers consistent, trustworthy performance or quietly fails in real-world use. For B2B tech teams deploying models across global markets, the weakest link often sits in the translation layer. A single mistranslated term, lost cultural nuance, or context drift can trigger bias in sentiment analysis, hallucinations in customer-support chatbots, or poor accuracy on non-English queries. These issues don’t stay hidden in labs; they surface as lost revenue, compliance headaches, and eroded user trust.

Linguistic accuracy directly shapes model behavior. Recent research on textual datasets shows that machine learning models tolerate error rates under 10% with almost no drop in feature representation or predictive power. Once the error rate reaches 10% or more, performance declines sharply: ROC-AUC scores for tasks like mortality prediction or risk classification fall noticeably. The same pattern appears in large-scale web crawls. One analysis of the Multi-Way ccMatrix dataset (6.4 billion unique sentences across 90 languages) found that machine-generated translations, especially multi-way parallel ones where the same sentence appears in many languages, dominate lower-resource content and introduce predictable noise. Models trained on this data produce less fluent output and more hallucinations because the underlying patterns are shallow and repetitive.
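To make the 10% figure actionable, a team can audit a random sample of translated segments and check whether the estimated error rate sits safely below that line. The sketch below is one way to do that, assuming a human reviewer has already counted the erroneous segments in the sample; the function names, the Wilson score interval, and the 95% confidence level are illustrative choices, not something prescribed by the research cited above.

```python
import math


def wilson_interval(errors: int, sample_size: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    if not 0 <= errors <= sample_size:
        raise ValueError("errors must be between 0 and sample_size")
    p = errors / sample_size
    denom = 1 + z**2 / sample_size
    centre = (p + z**2 / (2 * sample_size)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / sample_size + z**2 / (4 * sample_size**2)) / denom
    )
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


def audit_verdict(errors: int, sample_size: int, threshold: float = 0.10) -> str:
    """Compare the interval for the sampled error rate against the threshold."""
    low, high = wilson_interval(errors, sample_size)
    if high < threshold:
        return "below threshold"
    if low >= threshold:
        return "at or above threshold"
    return "inconclusive"  # the interval straddles the threshold: sample more


# Hypothetical audit: 30 flawed segments found in a random sample of 500.
print(audit_verdict(30, 500))
```

An "inconclusive" verdict means the sample is too small to tell either way, which is a cue to review more segments before the batch enters a training run.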

For technical documentation, the stakes rise further. Certified translation of machine learning papers must preserve precise terminology (think “gradient descent,” “transformer attention heads,” or jurisdiction-specific regulatory phrasing) without introducing subtle shifts that later corrupt fine-tuning runs. When accuracy slips here, downstream models inherit the distortion at scale.

The chart above maps the stark reality: English and a handful of high-resource languages command massive corpora, while low-resource languages cluster at the bottom with tiny datasets relative to speaker populations. Models trained on this imbalance simply cannot reason equally across markets.

Human-in-the-loop translation for data annotation closes that gap where automation alone breaks down. Pure machine translation handles surface-level word swaps reasonably well, but it routinely misses idioms, domain-specific intent, sarcasm in user reviews, or cultural references that change meaning entirely. Human experts step in at critical checkpoints—reviewing pre-annotated batches, flagging edge cases, and feeding corrections back into the loop.

Field data backs the approach. Teams using structured human-in-the-loop annotation maintain 87% accuracy while cutting costs by as much as 62% and reducing project time by a factor of three. The process also surfaces bias early: annotators catch when a sentiment label flips because of an untranslatable cultural cue or when a medical term loses precision across languages. The result is cleaner training sets that generalize instead of overfitting to English-centric patterns.

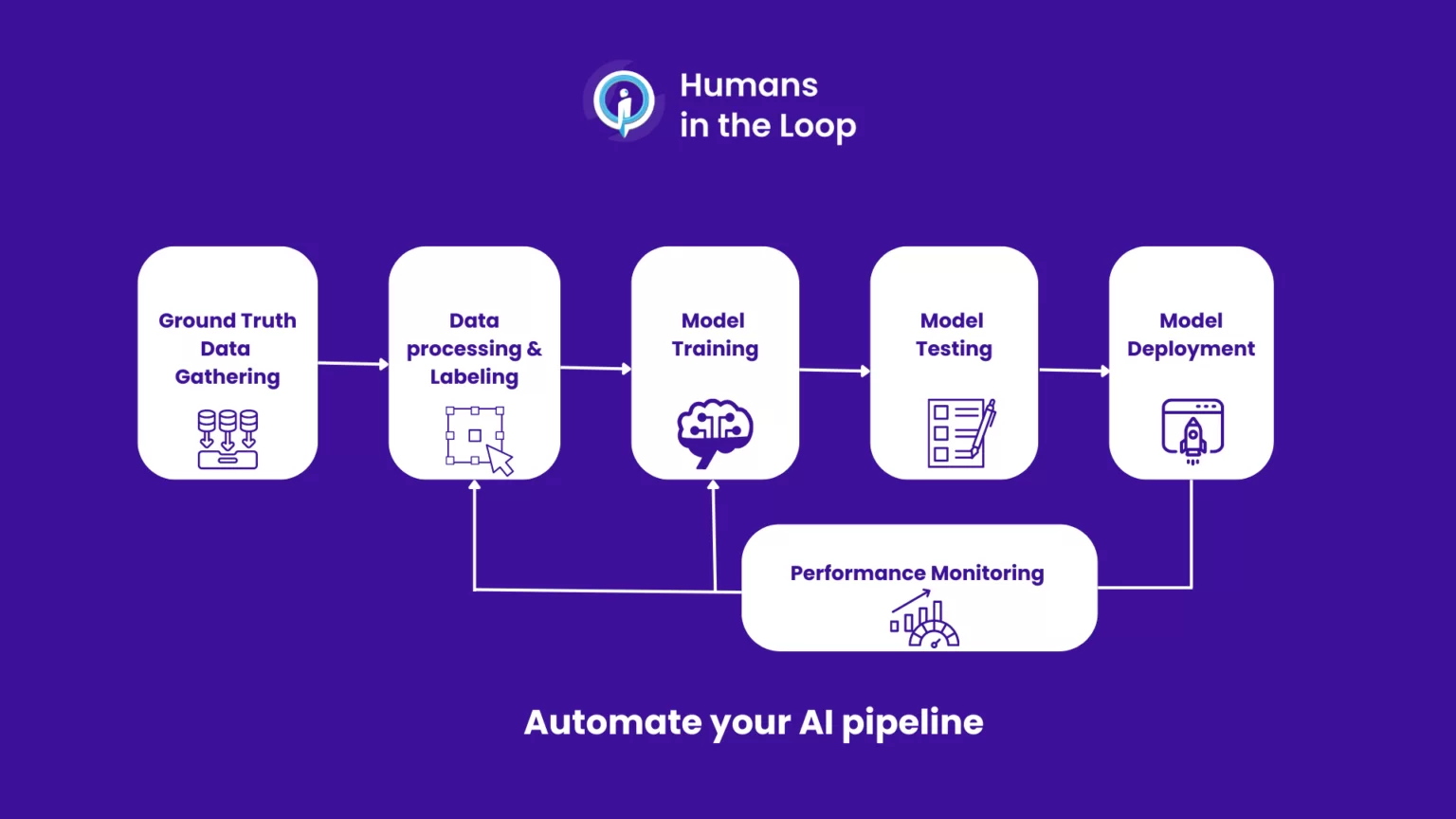

The diagram illustrates a typical human-in-the-loop pipeline: ground-truth collection flows into AI-assisted labeling, human review, model training, testing, and continuous monitoring. Each human touchpoint prevents error cascades that pure automation cannot detect.
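The human review step in that pipeline can be sketched as a simple routing rule: pre-annotations the model is confident about pass through, and the rest go to a reviewer whose corrections are merged back into the training set. Everything in this sketch is hypothetical, including the `PreAnnotation` record, the 0.85 confidence threshold, and the sample review text; a real pipeline would tune the threshold per language and task.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PreAnnotation:
    segment_id: str
    text: str
    label: str
    confidence: float  # model-reported score in [0, 1]
    reviewed: bool = False


def route_for_review(items, threshold=0.85):
    """Split machine pre-annotations into auto-accepted and human-review queues."""
    auto_accepted, needs_review = [], []
    for item in items:
        if item.confidence >= threshold:
            auto_accepted.append(item)
        else:
            needs_review.append(item)
    return auto_accepted, needs_review


def apply_corrections(needs_review, corrections):
    """Apply reviewer labels; items without a correction stay out of the result."""
    return [
        replace(item, label=corrections[item.segment_id], reviewed=True)
        for item in needs_review
        if item.segment_id in corrections
    ]


batch = [
    PreAnnotation("s1", "Great product, works as described.", "positive", 0.97),
    PreAnnotation("s2", "Oh great, it broke on day one.", "positive", 0.58),
    PreAnnotation("s3", "Delivery was late.", "negative", 0.91),
]
accepted, queue = route_for_review(batch)
fixed = apply_corrections(queue, {"s2": "negative"})  # reviewer catches the sarcasm
training_set = accepted + fixed
```

Keeping a `reviewed` flag on each record makes it possible to measure later how often human corrections disagreed with the model, which is one way to spot languages where the threshold is too lenient.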

Scaling datasets across languages without losing context demands disciplined protocols. Simply running everything through an off-the-shelf translator and calling it “multilingual data” creates the very problems B2B teams pay to avoid. Low-resource languages already suffer from underrepresentation: English plus the top ten languages account for roughly 82% of online content, leaving the rest starved for quality examples.

Safe scaling therefore combines three non-negotiables:

- Certified translation workflows that lock in terminology glossaries and style guides before any bulk processing.
- Human validation layers at key volume thresholds to preserve semantic fidelity.
- Strict data-security protocols (encrypted pipelines, role-based access, and audit trails), because training data often contains proprietary IP or regulated customer information.

Without these controls, context leaks: a legal clause might lose its binding force, a product description might imply the wrong safety standard, or a support ticket might misclassify urgency. The models that follow inherit those distortions at every inference.
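The first control, a locked terminology glossary, is the easiest to check automatically. The sketch below flags translated segments where a glossary term appears in the source but its approved rendering is missing from the target. The function name and the English-to-German glossary entry are illustrative assumptions, and the plain substring match on the target side is deliberately naive: it does not handle inflected forms, so a flagged segment is a prompt for human review rather than proof of an error.

```python
import re


def missing_glossary_terms(source: str, target: str, glossary: dict[str, str]):
    """Return (source_term, approved_target_term) pairs that the translation misses."""
    src = source.casefold()
    tgt = target.casefold()
    violations = []
    for src_term, tgt_term in glossary.items():
        # Match the source term on word boundaries so partial words do not count.
        in_source = re.search(rf"\b{re.escape(src_term.casefold())}\b", src)
        if in_source and tgt_term.casefold() not in tgt:
            violations.append((src_term, tgt_term))
    return violations


# Hypothetical glossary entry agreed on before bulk processing.
glossary = {"gradient descent": "Gradientenabstieg"}

ok = missing_glossary_terms(
    "Gradient descent updates the weights.",
    "Der Gradientenabstieg aktualisiert die Gewichte.",
    glossary,
)
flagged = missing_glossary_terms(
    "Gradient descent updates the weights.",
    "Das Gradientenverfahren aktualisiert die Gewichte.",
    glossary,
)
print(ok, flagged)
```

In the second call the translator chose a different, plausible term, which is exactly the kind of silent terminology drift a glossary check is meant to surface.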

The companies winning in multilingual AI treat translation not as a cost center but as core infrastructure. They invest in expert partners who understand both the linguistic subtleties and the data-security demands of enterprise deployments. Artlangs Translation stands out in this space. Proficient in more than 230 languages, the team has spent years specializing in translation services, video localization, short drama subtitle localization, game localization, multilingual dubbing for short dramas and audiobooks, and—crucially—multilingual data annotation and transcription. Their portfolio of enterprise cases shows exactly how human expertise layered into the translation pipeline turns raw multilingual volume into reliable, bias-resistant training assets that power production models without surprises.

When the next global rollout depends on your data pipeline performing equally well in Tokyo, São Paulo, and Berlin, the difference between good enough and production-grade comes down to how carefully that data was translated, annotated, and secured from the start.